Senior Cloud Solution Architect

Amazon Redshift can be considered to be one of the most important data warehouses currently and AWS offers it in its cloud. Working at Bluetab, we have had the pleasure of using it many times during our good/bad times as well as this year 2020. So we have created a list with the most common errors you will need to avoid and we hope this will be a great aid for you.

At Bluetab we have been working around data for over 10 years. In many of them, we have helped in the technological evolution of numerous companies by migrating from their traditional Data Warehouse analytics and BI environments to Big Data environments.

Additionally, at Cloud Practice we have been involved in cloud migrations and new developments of Big Data projects with Amazon Web Services and Google Cloud. All this experience has enabled us to create a group of highly qualified people who think/work in/for the cloud

To help you with your work in the cloud, we want to present the most common mistakes we have found when working with Redshift, the most important DW tool offered by AWS.

Here is the list:

Amazon Redshift is a very fast, cloud-based analytical (OLAP) database, fully managed by AWS. It simplifies and enhances data analysis using standard SQL compatible with most existing BI tools.

The most important features of Amazon Redshift are:

Amazon Redshift is not the same as other SQL database systems. Good practices are required to take advantage of all its benefits, so that the cluster will perform optimally.

A very common mistake made when starting to use Redshift is to assume that it is simply a vitamin-enriched PostgreSQL and that you can start working with Redshift based on a schema compatible with that. However, you could not be more wrong.

Although it is true that Redshift was based on an older version of PostgreSQL 8.0.2, its architecture has changed radically and has been optimised over the years to improve performance for its strictly analytical use. So you need to:

When designing the database, bear in mind that some key table design decisions have a considerable influence on overall query performance. Some good practices are:

DISTKEY type. This will distribute the data to the various nodes grouped by the chosen key values. This will enable you to perform JOIN type queries on that column very efficiently.ALL type. It is advisable to copy those tables commonly used in joins of dictionary type to all the nodes. In that way the JOIN statement with much bigger fact tables will execute much faster.EVEN type. The data will be distributed randomly in this way.As Redshift is a distributed MPP environment, query performance needs to be maximised by following some basic recommendations. Some good practices are:

SELECT *. and include only the columns you need.WHERE statement to restrict the amount of data to be read.GROUP BY and SORT BY clauses so that the query planner can use more efficient aggregation.Loading very large datasets can take a long time and consume a lot of cluster resources. Moreover, if this loading is performed inappropriately, it can also affect query performance.

This makes it advisable to follow these guidelines:

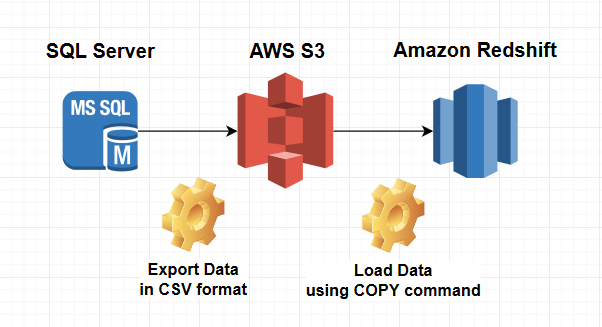

Always use the COPY command to load data in parallel from Amazon S3, Amazon EMR, Amazon DynamoDB or from different data sources on remote hosts.

copy customer from 's3://mybucket/mydata' iam_role 'arn:aws:iam::12345678901:role/MyRedshiftRole'; If possible, launch a single command instead of several. You can use a manifest file or patterns to upload multiple files at once.

Split the load data files so that they are:

To update data and insert new data efficiently when loading it, use a staging table.

-- Create an staging table and load it with the data to be updated

create temp table stage (like target);

insert into stage

select * from source

where source.filter = 'filter_expression';

-- Use an inner join with the staging table to remove the rows of the target table to be updated

begin transaction;

delete from target

using stage

where target.primarykey = stage.primarykey;

-- Insert all rows from the of the staging table.

insert into target

select * from stage;

end transaction;

-- Drop the staging table.

drop table stage; Over the years we have seen many customers who had serious performance issues with Redshift due to design failures in their databases. Many of them had tried to resolve these issues by adding more resources to the cluster rather than trying to fix the root problem.

Due to this, I suggest you follow the flow below to dimension your cluster:

Collect information on the type of queries to be performed, data set size, expected concurrency, etc.

Design your tables based on the queries that will be made.

Select the type of Redshift instance (DC2, DS2 or RA3) depending on the type of queries (simple, long, complex…).

Taking the data set size into account, calculate the number of nodes in your cluster.

# of Redshift nodes = (uncompressed data size) * 1.25 / (storage capacity of selected Redshift node type) « For storage size calculation, having a larger margin for performing maintenance tasks is also recommended »

Perform load tests to check performance.

If it does not work adequately, optimise the queries, even modifying the design of the tables if necessary.

Finally, if this is not sufficient, iterate until you find the appropriate node and size dimensioning.

It is quite likely that your use case will require multiple sessions or users running queries at the same time. In these cases, some queries can consume cluster resources for extended periods of time and affect the performance of the other queries. In this situation, simple queries may have to wait until longer queries are complete.

By using WLM, you will be able to manage the priority and capacity of the different types of executions by creating different execution queues.

You can configure the Amazon Redshift WLM to run in two different ways:

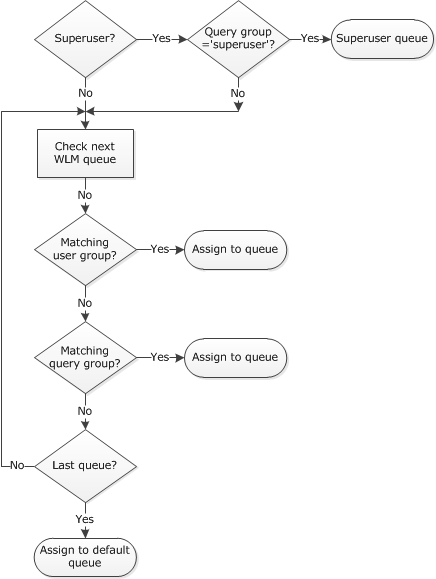

When a user runs a query, WLM assigns the query to the first matching queue, based on the WLM queue assignment rules.

Database maintenance is a term we use to describe a set of tasks executed with the intention of improving the database. There are routines to help performance, free up disk space, check data errors, check hardware faults, update internal statistics and many other obscure (but important) things.

In the case of Redshift, there is a mistaken feeling that as it is a service fully managed by Amazon, there is no need for any. So you create the cluster and forget about it. While AWS makes it easy for you to manage numerous tasks (create, stop, start, destroy or perform back-ups), this could not be further from the truth.

The most important maintenance tasks you need to perform in Redshift are:

VACUUM and it needs to be run manually to be able to use SORT KEYS of the INTERLEAVED type. This is quite a long and expensive process that will need to be performed, if possible, during maintenance windows.STV_LOAD_STATE where you can find information on the current status of the COPY instructions in progress. You should check them often to confirm that there are no data integrity errors.STL_ALERT_EVENT_LOG or through the AWS web console itself.My name is Álvaro Santos and I have been working as Solution Architect for over 5 years. I am certified in AWS, GCP, Apache Spark and a few others. I joined Bluetab in October 2018, and since then I have been involved in cloud Banking and Energy projects and I am also involved as a Cloud Master Partitioner. I am passionate about new distributed patterns, Big Data, open-source software and anything else cool in the IT world.